Highly dexterous robot hand can operate in the dark

(Nanowerk News) Think about what you do with your hands when you're at home at night, pressing buttons on your TV remote control, or at a restaurant, using all kinds of cutlery and glassware. All of these skills rely on touch, while your eyes are on a TV program or on the menu. Our hands and fingers are highly skilled mechanisms, and they are very sensitive to boot.

Robotics researchers have long tried to create "true" dexterity in robotic hands, but the goal has been elusive. Robotic grippers and suction cups can pick and place items, but more dexterous tasks such as assembly, insertion, reorientation, and packaging have remained in the realm of human manipulation. However, driven by advances in both sensing technology and machine learning techniques for processing sensor data, the field of robotic manipulation is changing very rapidly.

Highly dexterous robot hand even operates in the dark

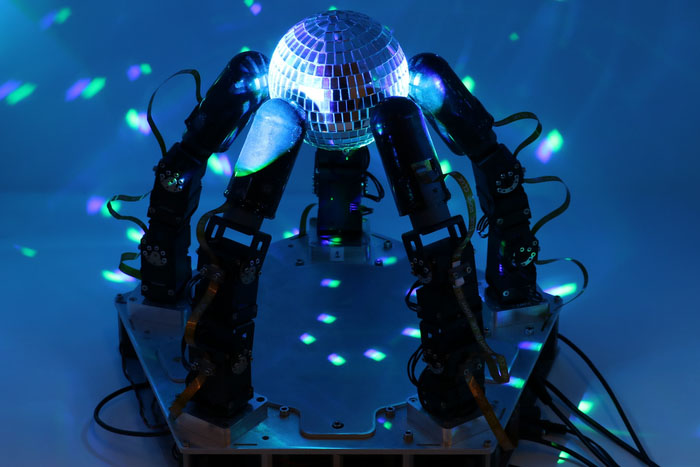

Researchers at Columbia Engineering have demonstrated a highly dexterous robot hand, one that combines an advanced sense of touch with motor learning algorithms to achieve high levels of dexterity.

As a demonstration of skill, the team chose a difficult manipulation task: executing arbitrarily large rotations of an unevenly shaped grasped object while always keeping the object in a stable, secure grip. This is very difficult because it requires constantly repositioning some of the fingers while the other fingers keep the object steady. Not only can the hand perform this task, it can do so without any visual feedback, based solely on touch sensing.
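To make that constraint concrete, the sketch below checks a sequence of finger-contact sets: at every step enough fingers must stay on the object, and only one finger may lift or land at a time. It is a minimal illustration under stated assumptions, not the team's controller: the `is_stable_gait` function, the three-contact minimum, and the one-finger-at-a-time rule are hypothetical choices made for this example.

```python
from typing import List, Set

# Hypothetical labels for the five fingers of the hand described in the article.
FINGERS = ("thumb", "index", "middle", "ring", "little")

def is_stable_gait(contact_sequence: List[Set[str]], min_contacts: int = 3) -> bool:
    """Return True if every step keeps at least `min_contacts` fingers on the
    object and at most one finger lifts or lands between consecutive steps.

    Both rules are illustrative assumptions, not criteria from the study.
    """
    for step, contacts in enumerate(contact_sequence):
        unknown = contacts - set(FINGERS)
        if unknown:
            raise ValueError(f"unknown finger(s) at step {step}: {sorted(unknown)}")
        if len(contacts) < min_contacts:
            return False  # too few fingers holding the object
        if step > 0:
            # Symmetric difference: fingers that lifted off or landed since the last step.
            changed = contacts ^ contact_sequence[step - 1]
            if len(changed) > 1:
                return False  # more than one finger repositioned at once
    return True

gait = [
    {"thumb", "index", "middle", "ring"},
    {"thumb", "index", "middle"},          # ring finger lifts to reposition
    {"thumb", "index", "middle", "ring"},  # ring finger lands in a new spot
]
print(is_stable_gait(gait))                  # True
print(is_stable_gait([{"thumb", "index"}]))  # False: only two contacts
```

A real controller would reason about contact forces and object geometry rather than contact counts; the point here is only that finger repositioning and stable holding have to be scheduled together.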

In addition to this new level of dexterity, the hand works without any external camera, so it is immune to lighting, occlusion, and similar problems. Because the hand does not rely on vision to manipulate objects, it can work in very difficult lighting conditions that would confuse vision-based algorithms: it can even operate in the dark.

“While our demonstration is a proof-of-concept task, meant to illustrate the capabilities of the hand, we believe that this level of dexterity will open up entirely new applications for robotic manipulation in the real world,” said Matei Ciocarlie, associate professor in the Departments of Mechanical Engineering and Computer Science. “Some of the more immediate uses might be in logistics and material handling, helping ease supply chain problems like the ones that have plagued our economy in recent years, and in advanced manufacturing and assembly in factories.”

[Video](https://www.youtube.com/watch?v=mYlc_OWgkyI)

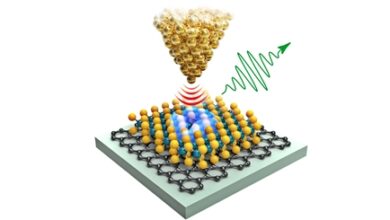

Using optics-based tactile fingers

In previous work, Ciocarlie's group collaborated with Ioannis Kymissis, professor of electrical engineering, to develop a new generation of optics-based tactile robot fingers. These were the first robot fingers to achieve contact localization with sub-millimeter precision while providing complete coverage of a complex, multi-curved surface. In addition, the fingers' compact packaging and low wire count made them easy to integrate into a complete robot hand.

Teaching hands to perform complex tasks

For this new work, led by Gagan Khandate, a doctoral researcher in Ciocarlie's group, the researchers designed and built a robot hand with five fingers and 15 independently actuated joints, with each finger equipped with the team's touch-sensing technology. The next step was to test the tactile hand's ability to perform complex manipulation tasks. To do this, they used new methods for motor learning, that is, the robot's ability to learn new physical tasks through practice. Specifically, they used a method called deep reinforcement learning, combined with a new algorithm they developed for effectively exploring possible motor strategies.

The robot completes about a year of practice in just a few hours of real time

The input to the motor learning algorithm consisted exclusively of the team's tactile and proprioceptive data, without any vision. Using simulation as a training ground, the robot completed about a year of practice in just a few hours of real time, thanks to modern physics simulators and highly parallel processors. The researchers then transferred the manipulation skill trained in simulation to the real robot hand, which achieved the level of dexterity the team had hoped for.
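The "year of practice in a few hours" claim is a ratio of simulated experience to wall-clock time. The arithmetic below assumes, purely for illustration, a 4-hour training run and simulator instances that each run 10 times faster than real time; neither figure comes from the study, and `required_speedup` and `parallel_envs_needed` are hypothetical helper names.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8,760 hours: "about a year" of simulated practice

def required_speedup(simulated_hours: float, wall_clock_hours: float) -> float:
    """Ratio of simulated experience to real (wall-clock) training time."""
    if wall_clock_hours <= 0:
        raise ValueError("wall_clock_hours must be positive")
    return simulated_hours / wall_clock_hours

def parallel_envs_needed(speedup: float, real_time_factor: float) -> int:
    """Simulator instances needed if each runs `real_time_factor` times faster
    than real time, rounded up to a whole instance."""
    if real_time_factor <= 0:
        raise ValueError("real_time_factor must be positive")
    return math.ceil(speedup / real_time_factor)

# Hypothetical inputs, not reported in the study.
WALL_CLOCK_HOURS = 4
REAL_TIME_FACTOR = 10

speedup = required_speedup(HOURS_PER_YEAR, WALL_CLOCK_HOURS)
print(speedup)                                          # 2190.0
print(parallel_envs_needed(speedup, REAL_TIME_FACTOR))  # 219
```

Under these assumed numbers, the run needs a speedup of 2,190 over real time, which 219 simulator instances running in parallel could supply. This is why highly parallel hardware, and not only a fast simulator, is what makes the compression possible.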

Ciocarlie notes that “the ultimate goal for the field remains assistive robotics in the home, the ultimate proving ground for real dexterity. In this study, we have shown that a robot hand can be highly dexterous based on touch sensing alone. Once we add visual feedback to the mix along with touch, we hope to achieve even more dexterity, and one day start to get closer to replicating the human hand.”

The ultimate goal: to combine abstract intelligence with embodied intelligence

Ultimately, Ciocarlie observes, physical robots that are useful in the real world need both abstract, semantic intelligence (to understand conceptually how the world works) and embodied intelligence (the skill to physically interact with the world). Large language models such as OpenAI's GPT-4 or Google's PaLM aim to provide the former, while dexterity in manipulation, as achieved in this study, represents a complementary advance in the latter.

For example, when asked how to make a sandwich, ChatGPT will type out a step-by-step plan in response, but it takes a dexterous robot to take that plan and actually make the sandwich. In the same way, the researchers hope that physically skilled robots will be able to take semantic intelligence out of the purely virtual world of the Internet and put it to good use on real-world physical tasks, perhaps even in our homes.
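The division of labor in the sandwich example can be sketched as a planner that emits text steps and an executor that must map each step to a physical skill. In this minimal sketch the plan is hard-coded rather than produced by a language model, and `grasp`, `place`, `SKILLS`, and `execute_plan` are hypothetical stand-ins for learned manipulation skills, not part of any system described in the article.

```python
from typing import Callable, Dict, List

# Hypothetical skill library: each function stands in for a learned motor skill
# that would actually move a robot hand; here it only returns a log message.
def grasp(item: str) -> str:
    return f"grasped {item}"

def place(item: str) -> str:
    return f"placed {item}"

SKILLS: Dict[str, Callable[[str], str]] = {"grasp": grasp, "place": place}

def execute_plan(plan: List[str]) -> List[str]:
    """Run each 'verb object' step with a matching skill; fail loudly otherwise."""
    log = []
    for step in plan:
        verb, _, obj = step.partition(" ")  # split "grasp bread" into verb and object
        skill = SKILLS.get(verb)
        if skill is None:
            raise NotImplementedError(f"no physical skill for step: {step!r}")
        log.append(skill(obj))
    return log

# A hard-coded plan standing in for a language model's step-by-step answer.
plan = ["grasp bread", "place bread", "grasp cheese", "place cheese"]
print(execute_plan(plan))
# ['grasped bread', 'placed bread', 'grasped cheese', 'placed cheese']
```

The executor raises an error for any step it has no skill for, which mirrors the article's point: a text plan alone does not make the sandwich.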

The paper has been accepted for publication at the Robotics: Science and Systems (RSS) Conference (Daegu, Korea, July 10-14, 2023) and is currently available as a preprint.